Dusty: A Low-cost Teleoperated Robot that Retrieves Objects from the Floor

People with motor impairments have consistently ranked the retrieval of dropped objects from the floor as a high-priority task for assistive robots. Motor impairments can both increase the chances that an individual will drop an object and make recovery of the object difficult or impossible. In a study we conducted, motor-impaired patients reported dropping objects an average of 5.5 times a day. When a caregiver was present, recovery took approximately 5 minutes; without a caregiver, recovery could be delayed by as long as two hours. To meet this need, we have been developing, since 2008, an inexpensive teleoperated robot that can effectively retrieve dropped objects. We call this robot “Dusty”.

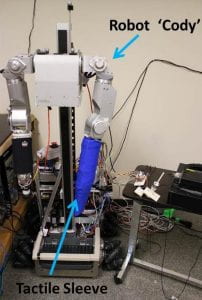

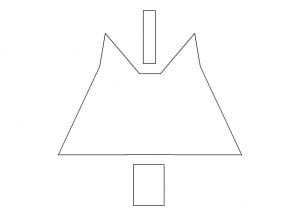

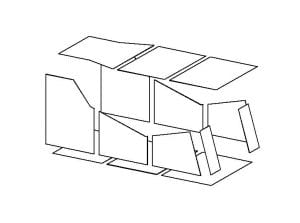

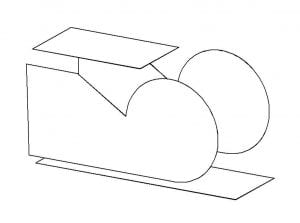

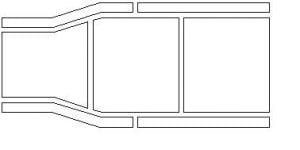

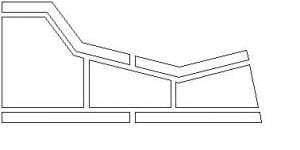

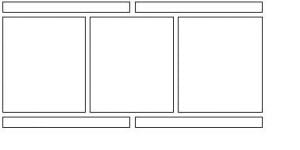

Dusty II

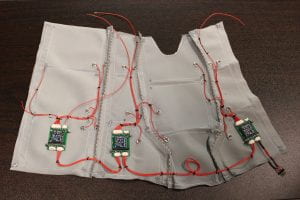

Dusty is now in its second generation. For convenience, we still use the iRobot Create as its mobile platform. Its new end-effector can pick up almost any household object weighing under one pound. Dusty is controlled with a wheelchair joystick. The user fetches an object from the floor by driving the robot to a position roughly in front of the object and pressing a button. Dusty then autonomously moves forward and grasps the object with its end-effector. The user can then drive the robot back and press the lift button, and Dusty will lift the object to a comfortable height for the user.
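The interaction described above (drive, press a button, autonomous grasp, drive back, press lift) can be read as a small state machine. The following is only an illustrative sketch under that reading, not Dusty's actual control software: the names `Mode`, `Event`, `TRANSITIONS`, `step`, and `run` are hypothetical, and no real joystick or iRobot Create interface is used.

```python
from enum import Enum, auto


class Mode(Enum):
    """Hypothetical operating modes, named after the steps in the text."""
    DRIVING = auto()    # user steers the robot with the joystick
    GRASPING = auto()   # robot moves forward and grasps on its own
    HOLDING = auto()    # object is in the end-effector; user drives back
    LIFTED = auto()     # object raised to a comfortable height


class Event(Enum):
    """Hypothetical events: two user buttons and one internal signal."""
    GRASP_BUTTON = auto()
    GRASP_DONE = auto()
    LIFT_BUTTON = auto()


# Assumed transition table; any (mode, event) pair not listed is ignored.
TRANSITIONS = {
    (Mode.DRIVING, Event.GRASP_BUTTON): Mode.GRASPING,
    (Mode.GRASPING, Event.GRASP_DONE): Mode.HOLDING,
    (Mode.HOLDING, Event.LIFT_BUTTON): Mode.LIFTED,
}


def step(mode, event):
    """Return the next mode, staying in place if the event does not apply."""
    return TRANSITIONS.get((mode, event), mode)


def run(events, start=Mode.DRIVING):
    """Feed a sequence of events through the state machine."""
    mode = start
    for event in events:
        mode = step(mode, event)
    return mode


if __name__ == "__main__":
    sequence = [Event.GRASP_BUTTON, Event.GRASP_DONE, Event.LIFT_BUTTON]
    print(run(sequence).name)
```

Keeping the transitions in a single table means that a button press in the wrong mode, such as pressing lift before anything has been grasped, simply leaves the mode unchanged.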

We are currently conducting a user study with ALS patients. The early results are encouraging, and we plan to release the data soon.

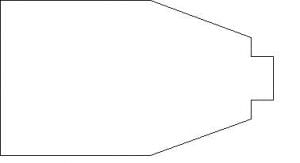

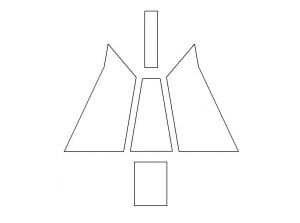

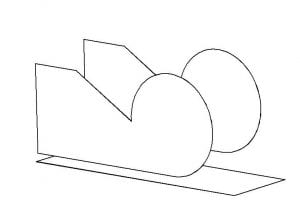

Dusty I

Dusty was initially inspired by the dustpan and the kitchen turner, which is why we named it “Dusty”. Although Dusty I was not a functioning robot prototype, it allowed us to test the robot’s novel end-effector. This end-effector, which was later modified and used in Dusty II, was already able to grasp 34 objects from the prioritized lists[*] on 4 types of floors, with a success rate of 94.7%. (The new end-effector of Dusty II has a success rate of over 97% in a much larger area.)

Publications

- Dusty: An Assistive Mobile Manipulator that Retrieves Dropped Objects for People with Motor Impairments, Chih-Hung King, Tiffany L. Chen, , Jonathan D. Glass, and Charles C. Kemp, Disability and Rehabilitation: Assistive Technology, 2011

- Dusty: A Teleoperated Assistive Mobile Manipulator that Retrieves Objects from the Floor, , Chih-Hung King, , and Charles C. Kemp, International Symposium on Quality of Life Technology, 2010. (Project Webpage)

- 1000 Trials: An Empirically Validated End Effector that Robustly Grasps Objects from the Floor, Zhe Xu, Travis Deyle, and Charles C. Kemp, IEEE International Conference on Robotics and Automation, 2009

[*]:

- A List of Household Objects for Robotic Retrieval Prioritized by People with ALS, Young Sang Choi, Travis Deyle, and Charles C. Kemp, arXiv.org, 0902.2186v1, 2009

- A List of Household Objects for Robotic Retrieval Prioritized by People with ALS, Young Sang Choi, Travis Deyle, Tiffany L. Chen, Jonathan D. Glass, and Charles C. Kemp, International Conference on Rehabilitation Robotics, 2009